The race to profit from attention incentivizes companies to develop increasingly persuasive techniques – notifications, social cues, personalized feeds, and more – that keep you coming back and let them monitor and analyze your behavior as closely as they can.

In this resource, you’ll learn:

- What persuasive technology is.

- Why we’re vulnerable to it.

- How to identify persuasive design features on the apps you use.

By understanding persuasive technology, you’ll be able to identify the difference between technology that uses you and humane technology that is useful to you.

Introduction

“I got addicted, always checking my phone, obsessed with keeping my streaks, worrying that someone needed my attention 24/7.”

“I remember one night specifically that was probably when I was at my peak of using [TikTok] when I just caught myself using it for a couple of hours without stopping.”

We tend to think of our phones and computers as tools. We use a hammer to secure a nail; we use our phone to stay in touch. But if our devices are just tools, how do they have such a strong impact on our lives? Are we using our technology, or is it using us?

Think about your own experiences on social media.

- Have you ever looked down at your phone and immediately gotten distracted without knowing why?

- How does Dasani’s experience of feeling controlled by social media compare to yours?

- How does Siri’s experience of passively using social media compare to yours?

In the Attention Economy, we discussed how social media companies are caught in a race for attention that shapes the apps we use every day. Their products are free to us because we are the products being sold.

Dasani and Siri’s experiences on social media are the outcome of a system incentivized to develop features designed to grab and keep your attention. To understand the role of social media in our lives and our world, it’s important to be able to identify these features and understand where they come from.

What is persuasive technology?

“If something is a tool, it genuinely is just sitting there, waiting patiently. If something is not a tool, it’s demanding things from you. It’s seducing you. It’s manipulating you. It wants things from you. And we’ve moved away from having a tools-based technology environment to an addiction- and manipulation-based technology environment. That’s what’s changed. Social media isn’t a tool that’s just waiting to be used. It has its own goals, and it has its own means of pursuing them by using your psychology against you.”

Platforms like Facebook, Twitter, Instagram, Snapchat, and TikTok are built on persuasive technology: technology created specifically to change its users’ opinions, attitudes, or behaviors to meet the platform’s goals.

Technology companies consider factors like motivation, ability, and triggers when designing their apps, with the goal of persuading you to spend more time clicking and scrolling. Motivation is what drives you to act, such as the desire for social connection. Ability means you can easily do what the app wants you to do. Triggers are the prompting features, like notifications, that keep you coming back.
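The way these three factors combine can be pictured with a toy sketch. It is an illustration only, not how any real app works: the 0-to-1 scores, the 0.5 threshold, and the function name `will_open_app` are all assumptions invented for this example.

```python
def will_open_app(motivation: float, ability: float, trigger: bool,
                  threshold: float = 0.5) -> bool:
    """Toy model: the user acts only if a trigger arrives while
    motivation and ability together are high enough.

    motivation and ability are made-up scores between 0 and 1.
    """
    if not trigger:
        # No notification, no prompt: nothing reminds the user to act.
        return False
    return motivation * ability >= threshold


# A tagged-photo notification: strong social motivation, one tap to open.
print(will_open_app(motivation=0.9, ability=0.9, trigger=True))   # True
# Same motivation, but no notification ever arrives.
print(will_open_app(motivation=0.9, ability=0.9, trigger=False))  # False
# A trigger arrives, but opening the app takes too much effort.
print(will_open_app(motivation=0.9, ability=0.2, trigger=True))   # False
```

In this picture, a designer can raise the odds of a tap by working on any of the three inputs: a stronger social hook, fewer steps, or a better-timed prompt.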

Take a look at the home screen on your phone.

Your home screen is probably filled with apps marked by red dots with numbers in them. We all know that these red dots are notifications. What we may not have considered is that everything on that screen was put there for a reason. A designer intentionally decided to put each dot there, put a number in it, and make it red instead of, say, green, because we instinctively respond to red with urgency. Each app’s red dot is a trigger to open that app.

We feel like we need to address notifications as they stack up. They stress us out and eventually we click back into our apps, where persuasive design pulls us where it wants us to go.

Push notifications – the notifications we receive from our apps when they’re not open – operate on similar principles.

When our push notifications tell us that someone has just tagged us in a photo, we are immediately motivated to see what that photo is and how we look in it. If someone commented on a post we made, it is only natural for us to want to read that comment. If someone we’re interested in begins a livestream, we’re going to want to hop in. Because we are social animals motivated to care what others think of us, these notifications are almost impossible to ignore. A simple tap on the notification conveniently brings us right into the app.

Behind these features are designers, psychologists, and other behavioral science experts working to ensure that their product captures your attention. Thousands of decisions go into when to show you these notifications, which friends you’ll be most responsive to, and what videos to automatically play to get you watching. Every ping, every flick of the thumb is designed to keep you engaged with the app and keep you coming back.

Think about your favorite social media app.

- How does it pull you in?

- What are some ways you use the app that are not aligned with your goals for yourself?

What is artificial intelligence and how do social media companies use it?

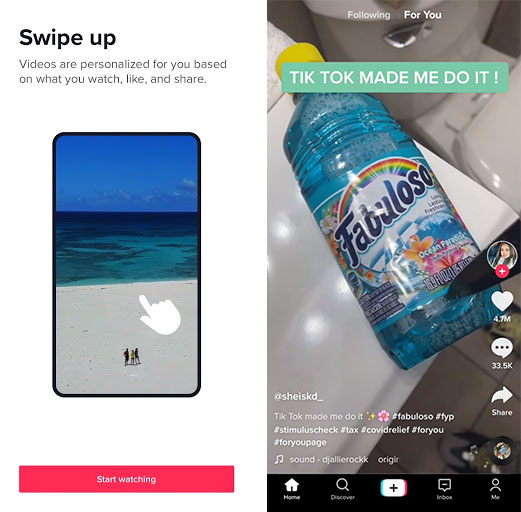

“TikTok had started recommending weight loss videos and ‘what I eat in a day’ videos to my ‘For You’ page.”

Persuasive design features on social media have us constantly checking notifications, monitoring our “likes,” and endlessly scrolling. These features aren’t merely there to keep you entertained. Instead, they incentivize you to keep coming back and create opportunities to analyze your behavior while you’re there. The more data companies have, the more easily they can figure out how to hook you.

Underneath what you see, driving the posts in your feeds, notifications you receive, recommendations, and much more, is artificial intelligence (AI). AI enables computers to mimic some of the ways human minds work, from learning to problem-solving to decision-making. AI is powered by algorithms, which are instructions that tell a computer how to operate.

Algorithms can be used to create self-driving cars or find cures for diseases, but persuasive technology companies specialize in algorithms that influence human behavior because that’s what they sell to the advertisers who are their customers.

For example, ByteDance, the company that owns TikTok and several other apps worldwide, is a persuasive AI company, not a social media company. Its success as a business comes from the sophisticated algorithms its apps are built on. The company studies how people use TikTok, considering everything about its users from the websites they browse to how they type, down to keystroke rhythms and patterns. These algorithms have made ByteDance the most valuable startup in the world.¹

Features like following other users, likes, comments, and shares feed information to algorithms so they can capture and keep your attention.

TikTok isn’t addictive just because creators are funny; it’s addictive because it uses one of the most sophisticated persuasive algorithms on the planet to choose videos that will keep you watching. When everyone’s videos get more and more likes, everyone ends up on TikTok far longer than they intended.

Take another look at your favorite social media app.

- Keeping in mind what you have learned about algorithms, what do you notice about what your feeds are showing you?

- Why do you think the algorithm decided to show you what it did?

What makes persuasive technology so powerful?

To navigate the complex world around us, our brains have to make fast decisions. We have evolved shortcuts for making decisions, for everything from the way we process information to the way we relate to others around us. These shortcuts are meant to keep us alive and healthy. For example:

- We pay more attention to fearful, dangerous stimuli to stay safe.

- We seek out sweet and fatty foods for their readily available energy.

- We remember things that hurt us more than things that help us so we can predict future consequences.

- We tend to follow the popular opinion of those around us to build stronger communities around shared ideas.

These traits served our ancestors well over millions of years. But now, more humans than ever have ready access to food, clothing, shelter, and medicine. We have gone from foraging for calories to being surrounded by cheap sugar, from navigating relationships with a small tribe to navigating online worlds with billions of participants. And yet our psychology is still shaped by these traits.

Persuasive technology is honed to tap into our psychology and push us towards certain behaviors. For example:

- Notifications (like vibrations, buzzing, red dots, flashing lights, etc.) mimic naturally occurring signs of danger to pull us into apps.

- The possibility of new comments or "likes" keeps us compulsively monitoring for updates, seeking feelings of pleasure and reward.

- Design features like infinite scroll (where more content loads automatically when you reach the bottom of the page) keep us continuously engaged.

Manipulating weaknesses in human psychology isn’t new. Con artists and magicians have long known these vulnerabilities and used them to take advantage of us. Advertisers, too, have exploited deep truths about our psychology to influence our behavior. The food industry has long hijacked our survival instincts, addicting us to fat, salt, and sugar mixed in just the right proportions, profiting massively while throwing our bodies wildly out of balance.

Persuasive technology is up against our human physiology, which changes very slowly, over hundreds of thousands of years. Meanwhile, our technological capabilities have been growing exponentially since the first general-purpose electronic computer was unveiled in 1946.

Processing power increased more than a trillionfold between 1956 and 2015 and continues to grow exponentially. As it increases, so too does the ability to model and manipulate human minds. Advanced algorithms compare our behavior with the behavior of others like us to discover how best to influence us. Apps then sell that power of influence to companies or individuals who want to shape behavior, opinions, or votes.
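To get a feel for what a trillionfold increase means, the figures quoted above can be turned into a doubling time. This is back-of-the-envelope arithmetic that assumes steady exponential growth across the whole period, which is a simplification; the variable names are just for illustration.

```python
import math

growth_factor = 1e12   # the trillionfold increase quoted in the text
years = 2015 - 1956    # the period quoted in the text

# How many times would processing power have to double to grow a trillionfold?
doublings = math.log2(growth_factor)

# If growth were perfectly steady, how long would each doubling take?
years_per_doubling = years / doublings

print(f"{doublings:.1f} doublings in {years} years")
print(f"one doubling roughly every {years_per_doubling:.2f} years")
```

Under that assumption, processing power doubled about every year and a half, about 40 times in a row, while human psychology stayed essentially the same.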

Persuasive technology constantly learns more about us and pairs that information with compelling and creative design ideas to influence our behavior more effectively each day.

We can protect ourselves from time to time through self-awareness and willpower, but if we keep putting our brains in competition with these continually improving persuasive technologies, we’re destined to be exploited.

We’ve discussed how persuasive technology is behind all of the social media platforms we use each day. It learns from our behavior and taps into our psychology to build increasingly reliable algorithms that further influence our behavior.

- Now that you know more about the role persuasive technology plays in our online lives, what concerns do you have about its impact?

- What are some steps you can take to better combat persuasive technology’s impact on your life?

Individually and collectively, we can look for ways to reduce the role of these types of technologies in our lives. We discuss this further in the Take Control of Your Social Media Use Action Guide.

What harms are caused by persuasive technology?

“Meanwhile, you get slowly sucked in, spending more and more time on it. I began to be aware that I was believing things that…didn't exist.”

“I got on social media around high school, and I saw people become more distant because of it. There used to be such freedom in the way that we behaved as kids, and now people were obsessing over likes and hearts and everything.”

At the beginning of this Issue Guide, we shared Dasani and Siri’s stories of distraction on social media. We often hear stories like these and think, “Yeah, social media can be really distracting.” Distractions here and there can be annoying, but they’re no big deal. You get distracted, you move on.

But when distraction happens over and over again, it’s part of something bigger. Social media apps on our phones are doing more than distracting us: as in Jasper and Amanda’s stories, apps can change our behavior, what we think, how we feel, and ultimately how we understand ourselves.

Persuasive technology is meant to drive profit for tech companies. But each of the features we’ve discussed has unintended consequences. Persuasive design can’t control us like a puppet on a string, but it can influence us in ways that add up. It teaches all of us – adults included! – habits that can become compulsions and even addictions. And it often creates a funhouse mirror that can shape what we think about culture, politics, and even our own bodies.

We’ve discussed how algorithms determine which topics flood our feeds by looking for patterns in past data to make predictions about what will keep us engaged. Now, imagine an AI algorithm observing human behavior, trying to figure out what humans want. It notices that whenever people drive past a car crash, they slow down and give it their close attention. Clearly, people must be drawn to car crashes, so maybe what they want is highways full of car crashes!

Of course not. People look at car crashes because they need to be aware of a potentially dangerous situation, and because we are naturally curious about the world around us.

As ridiculous as this example sounds, our social media platforms constantly do the same thing. They fill our news feeds with metaphorical car crashes by promoting more provocative and performative content, leaving us in the online equivalent of a traffic jam. They show us not what we want, but the things we can’t help looking at.

Just because we look at or click on something doesn’t mean it’s what we want, or even what we believe is best for us. More often, our actions online reflect how effectively apps are nudging us toward specific behavior, usually more engagement.
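The car-crash problem can be sketched in a few lines of code. The example below is a toy, not any platform's actual ranking system: the posts, the `predicted_seconds` and `user_rating` numbers, and the function names are all made up for illustration. It ranks the same posts two ways, once by predicted watch time and once by how worthwhile people say the post was.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    predicted_seconds: float  # hypothetical: how long people are expected to look
    user_rating: float        # hypothetical: how worthwhile people say it was (1-5)


posts = [
    Post("Friend's graduation photos", predicted_seconds=20, user_rating=4.8),
    Post("Stranger 'destroys' rival in heated argument", predicted_seconds=95, user_rating=1.9),
    Post("How-to video for a hobby you enjoy", predicted_seconds=60, user_rating=4.5),
]


def rank_by_engagement(items):
    """Order posts by what is predicted to hold attention longest."""
    return sorted(items, key=lambda p: p.predicted_seconds, reverse=True)


def rank_by_stated_value(items):
    """Order posts by what people say was worth their time."""
    return sorted(items, key=lambda p: p.user_rating, reverse=True)


print("Top by engagement:  ", rank_by_engagement(posts)[0].title)
print("Top by stated value:", rank_by_stated_value(posts)[0].title)
```

With these made-up numbers, the engagement-ranked feed puts the heated argument first even though it is the post people rate lowest.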

YouTube’s recommendation algorithms, which determine 70% of what billions of people watch, have found that a great way to keep people watching is to suggest content that is more extreme, more negative, or more conspiratorial. Keywords like “destroys” and “hates” show up more often in the videos YouTube’s algorithms recommend.

- What do you notice about the kind of content that YouTube’s recommendation algorithm finds most engaging?

- How might amplifying this content change how people see the world?

We will go more in-depth on the harms of persuasive technology in the Seeing the Consequences Issue Guide.

Where is all this persuasive technology taking us?

When thinking about persuasive technology, it is helpful to compare it with products built on non-persuasive technologies like Zoom or Notes. These products serve as tools that support you in achieving your goals, rather than working to pull you toward their goals.

Unfortunately, an increasing number of technologies are leaning on the power of persuasion. As algorithms become better at changing your behavior, companies stand to make more money. The profit motive incentivizes companies to add persuasive technology to more types of apps. For example:

Many game apps use loot boxes. Loot boxes are mystery clicks (often costing real money) that yield random virtual rewards. They operate much like scratch lottery tickets and can easily become addictive.

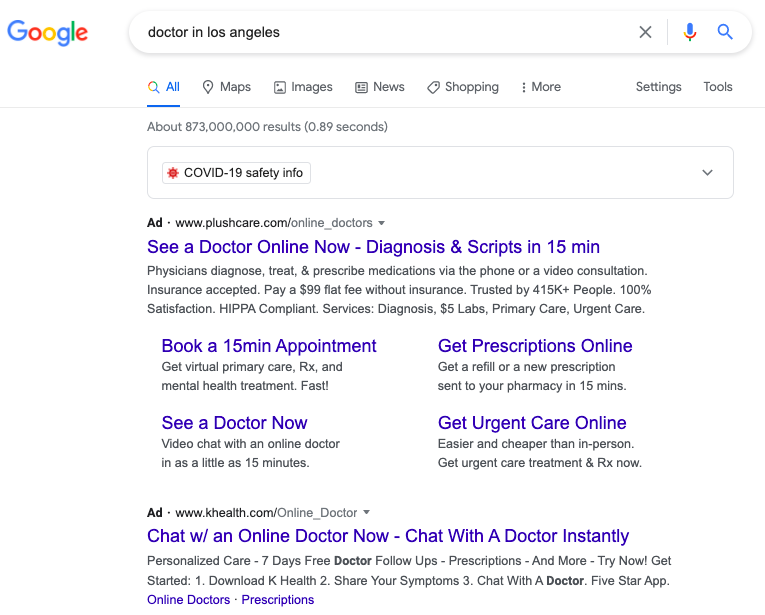

Google Search uses a sophisticated algorithm to find the best matches for what you’re looking for. But what’s above those “best matches”? Paid ads that look like search results, where advertisers compete to pull you away!

On Google Maps, advertisers can pay for their location to be a “promoted pin” on the map. So now, instead of simply offering users directions, Google Maps offers advertisers the ability to compete for your attention while you’re using the app for another purpose. Factors like search and browsing history, interests, time of day, and demographics are used to choose which ads to show.

Even a simple Google search for a doctor is optimized to feed us ads.

The reality is that very few apps exist only as tools. Under the guise of providing entertainment, information, or directions, many apps include persuasive features designed to sell you to advertisers.

We are on a path to a technology environment where we are surrounded by powerful technology that’s competing to track, influence, and monetize us.

In a notebook, create a simple chart like this one:

| App | Persuasive Technique | Notes |
| --- | --- | --- |
| | Received a push notification that I had three unread messages | Received first thing in the morning, when I usually check social media |
| | Recommended articles about celebrity gossip | I tend to click on these types of articles |

Keep track of all the places online you notice persuasive techniques. You can do this now and look around for a short time, keep track over the next 24 hours, or over the course of the week. No matter the amount of time, pay close attention to the role persuasive technology plays in your life.

We’ll reflect on what you find at the beginning of the next Issue Guide.

Go Deeper

On this episode of the Center for Humane Technology’s podcast Your Undivided Attention, Natasha Dow Schüll, author of Addiction by Design, discusses the stunning similarities between the design of gambling technology in Las Vegas and the persuasive technologies we use every day.

On this episode of Your Undivided Attention, AI expert Guillaume Chaslot, who worked on YouTube’s recommendation engine, explains how YouTube’s priority to keep us watching spins up outrage, conspiracy theories, and extremism.